Monki.ai: Designing an AI Companion for Children’s Learning Through Story

Project Snapshot

An LLM-powered dialogic reading coach that helps K–8 learners initiate and sustain meaningful reading conversations, with scaffolds that run from task decoding to revision, plus built-in guardrails.

Users & context: K–8 students and teachers in classroom settings, where starting a conversation and sustaining reflective dialogue are common friction points.

Outcome: Three design-based research (DBR) cycles; 312 students; 9,600+ reading logs, 624 student surveys, and 186 teacher reports; observed pre/post gains in lexical diversity and narrative-level comprehension.

Core Design Challenge: How might we design an AI reading companion that scaffolds productive struggle and builds comprehension confidence for K–8 students, while ensuring safety and alignment with classroom norms?

Primary Research Question: What scaffolding structures in an AI dialogue system most effectively support the transition from task decoding to narrative understanding in young readers?

My Role & Scope

Role: Product & Learning Research Lead (Human-AI interaction + evaluation)

I collaborated closely with curriculum designers, teachers (as co-designers), and engineering teams to ensure pedagogical alignment, technical feasibility, and seamless classroom integration.

I owned:

Framing learning goals and “friction moments” (starting, sustaining, and deepening dialogue)

Designing conversation scaffolds and multi-step prompting (task decoding → drafting → revision)

Defining guardrails and disclosure aligned to classroom use

Planning instrumentation and analyzing mixed evidence (logs, surveys, teacher reports) to drive iteration priorities

Deliverables: conversation flow diagrams, prompt scaffolds, guardrail specs, pilot protocols, pre/post measures, iteration notes, evaluation summaries

Not my scope: core model training and infrastructure

Create — Idea & Hypothesis

Early pilots showed a consistent pattern: many children could not smoothly initiate a conversation, stayed in “confusion” without progressing to comprehension, and disengaged because of negative academic emotions. I reframed the problem from “make the AI more interactive” to “design a progression that helps learners move from uncertainty to meaning.”

Hypothesis: If we scaffold dialogue in small, low-barrier steps—entry prompts, guided questioning, and revision cues—learners can engage in productive struggle and build comprehension more reliably.

Design — Research & Evidence

I conducted thematic analysis on teacher reports to identify recurring friction points, performed correlation analysis between log patterns and survey responses to validate engagement metrics, and used descriptive statistics to quantify pre/post changes in key learning indicators.
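As a rough illustration of the descriptive pre/post step, the sketch below computes a mean per-student change in lexical diversity. It assumes type-token ratio (unique words divided by total words) as the measure, which is one common choice; the measure actually used in the project is not stated here. The function names and sample sentences are hypothetical.

```python
from statistics import mean


def type_token_ratio(text: str) -> float:
    """Unique words divided by total words; 0.0 for empty text."""
    # Lowercase and strip surrounding punctuation so "Dog." and "dog" match.
    tokens = [w.strip(".,!?;:\"'()").lower() for w in text.split()]
    tokens = [t for t in tokens if t]
    return len(set(tokens)) / len(tokens) if tokens else 0.0


def mean_gain(pre: list[str], post: list[str]) -> float:
    """Mean per-student change in TTR (post minus pre), paired by index."""
    if len(pre) != len(post):
        raise ValueError("pre and post must be paired per student")
    return mean(
        type_token_ratio(after) - type_token_ratio(before)
        for before, after in zip(pre, post)
    )


# Made-up responses for illustration only, not project data.
pre = ["the dog ran and the dog ran"]
post = ["the brave dog chased a red ball"]
print(round(mean_gain(pre, post), 3))
```

Type-token ratio is sensitive to response length, so a real analysis would likely compare samples of similar length or use a length-corrected measure.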

To understand where breakdowns occurred, I combined classroom co-design and pilots with mixed-methods data collection. We gathered:

9,600+ reading interaction logs

624 student surveys

186 teacher reports

I synthesized these signals into “failure moments” (e.g., getting started, losing the thread, shallow responses) and translated them into concrete interaction requirements.

Design — Insights → Decisions

Key decisions included:

Conversation entry points: provide low-friction openers and examples to help learners start without fear of being “wrong.”

Step-by-step scaffolding: guide learners from decoding to elaboration to revision, rather than jumping directly to “analysis.”

Safety and misuse guardrails: add boundaries and disclosure appropriate for classroom use and teacher expectations.

Insight 1: Students often hesitated to start because they feared giving a “wrong” first answer.

Decision: Designed low-friction, example-based conversation openers to reduce initial anxiety.

Insight 2: Logs showed conversations often stalled after a single exchange, lacking depth.

Decision: Implemented a step-by-step scaffold (decode → elaborate → revise) to structure progressive dialogue.
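One way to picture the decode → elaborate → revise progression is as a small state machine that advances one stage at a time and never skips ahead. This is a minimal sketch, not the product's implementation: the prompts, the `next_stage` helper, and the word-count threshold used to decide when a reply is "enough" are all assumptions made for illustration.

```python
from enum import Enum


class Stage(Enum):
    DECODE = "decode"
    ELABORATE = "elaborate"
    REVISE = "revise"


# Hypothetical prompts, not taken from the product.
PROMPTS = {
    Stage.DECODE: "What is happening in this part of the story?",
    Stage.ELABORATE: "Why do you think the character did that?",
    Stage.REVISE: "Would you like to add to or change your answer?",
}

ORDER = [Stage.DECODE, Stage.ELABORATE, Stage.REVISE]


def next_stage(current: Stage, reply: str, min_words: int = 3) -> Stage:
    """Advance one stage if the reply has at least min_words words; otherwise stay."""
    # Assumption: word count stands in for a real judgment of reply quality.
    if len(reply.split()) < min_words:
        return current  # stay and re-prompt rather than skip ahead
    i = ORDER.index(current)
    return ORDER[min(i + 1, len(ORDER) - 1)]


stage = Stage.DECODE
for reply in ["um", "The fox hid the eggs", "Because he was hungry and scared"]:
    stage = next_stage(stage, reply)
    print(stage.value, "->", PROMPTS[stage])
```

Keeping the learner at the current stage after a very short reply mirrors the design intent of guiding progression step by step rather than jumping directly to analysis.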

Iterate — DBR Cycles (3 rounds)

Across three DBR cycles, each iteration followed the same loop: evidence → design change → re-test.

Cycle 1: baseline experience revealed initiation and persistence problems → added structured openers and next-step cues.

Cycle 2: teacher feedback showed emotional friction and inconsistent progression → refined tone, pacing, and feedback prompts.

Cycle 3: learning signals suggested where scaffolds helped vs. over-guided → adjusted autonomy, revision support, and guardrails accordingly.

Impact

We observed pre/post gains in lexical diversity and narrative-level comprehension, alongside improved conversational flow during classroom use.

Beyond metrics, this project underscored for me the importance of designing for emotional load as well as cognitive load in learning technologies. It also raised a new question about the long-term adaptation of AI scaffolds: how might the system fade support as learner competence grows? I am eager to explore this question further in academic settings.

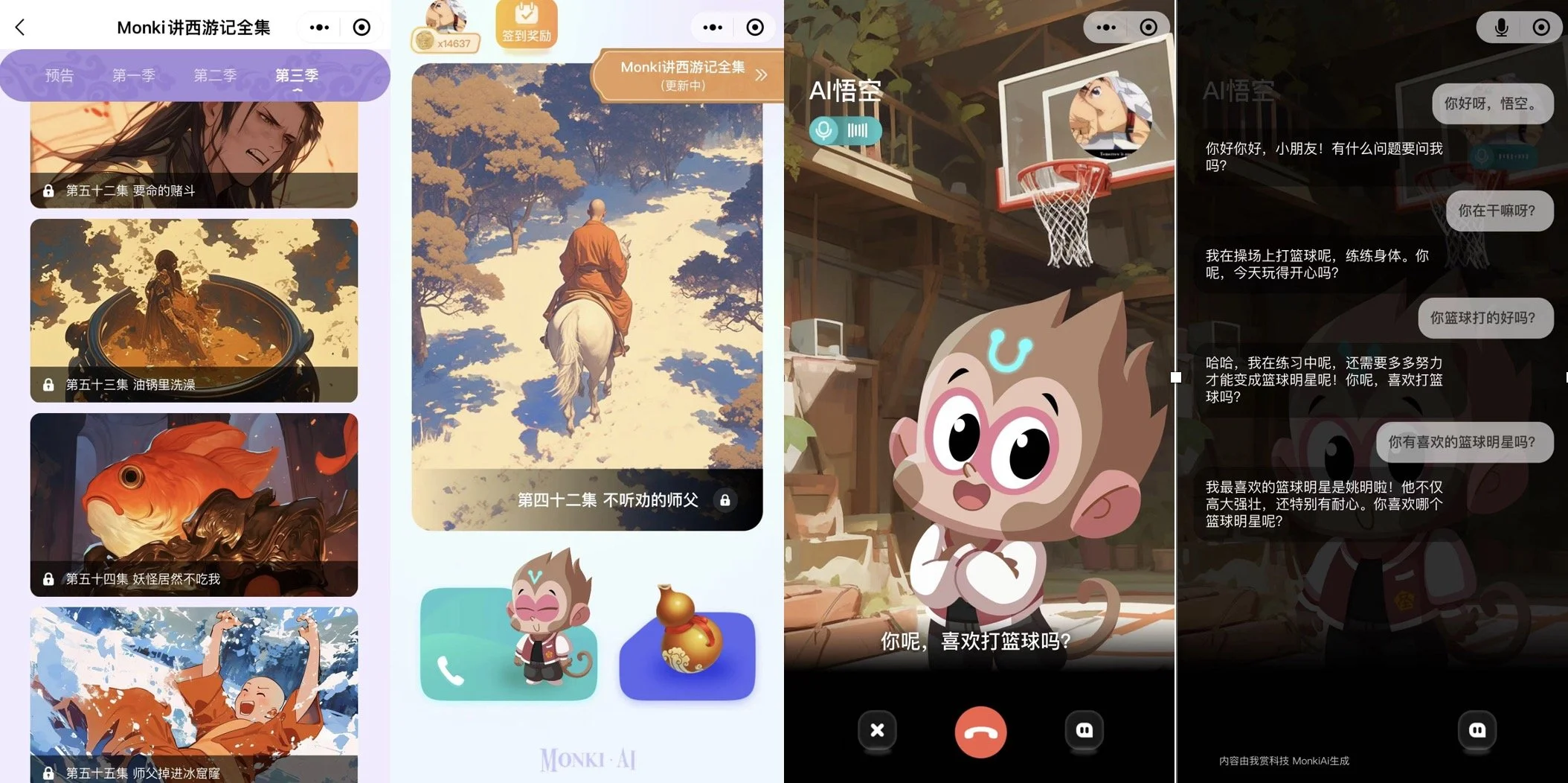

Trailer for Monki

Manual for Monki.ai

Real-time interaction during storytelling

AI Avatar Chatting